New research shows brain-computer interfaces can decode inner speech with up to 74% accuracy, raising profound questions about consent and control.


Brain-Computer Interfaces Cross a Threshold

Last week, in a new study published in Cell, a team of researchers crossed a line that, until now, belonged to science fiction. A brain-computer interface (BCI) successfully decoded inner speech with up to 74% accuracy. No lips moving. No hand gestures. No whispered words. Just thoughts.

The participants in the study were individuals living with ALS or paralysis, people who could not rely on traditional gestures or speech. The system tapped directly into the motor cortex, where inner speech lives as a scaled-down mirror of spoken words, and translated imagined sentences into text.

The most startling revelation is that a thought keyword could activate the computer. Think it, and the system begins decoding your private monologue. Stop thinking it, and the system falls silent.

It didn’t stop there. The system picked up when patients began silently counting in their heads. What was once the sanctum of private thought had a microphone. And suddenly, the debate about BCIs shifted from theoretical promise to practical and very human consequences.

How Brain-Computer Interfaces Decode Inner Speech

Inner speech is something most of us take for granted: the quiet voice we use to rehearse a line, remember a phone number, or count the change in our pocket. Neuroscience has long known that this voice maps onto motor regions; your brain prepares the act of speaking, even when your mouth doesn’t move.

This new study demonstrates that these traces are structured enough for an AI model to decode reliably. Using advanced recurrent networks, researchers mapped inner speech into recognizable words, hitting conversational-level performance.

The keyword trigger worked like a mental equivalent of “Alexa,” “Hey Google,” or “Hey Siri.” Instead of vocalizing, a participant thought of a specific word, in this case, “ChittyChittyBangBang.” That thought acted like a lockpick, opening the channel and allowing inner speech to be decoded. Stop thinking it, and the lock snapped shut.
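To make the lock metaphor concrete, here is a minimal sketch of a keyword gate in Python. It is not the study's implementation. It assumes a hypothetical upstream decoder that emits one word at a time, and it assumes the keyword toggles the channel open and closed, a detail the study summary above does not spell out. The class name `GatedDecoder` and its fields are invented for illustration.

```python
from dataclasses import dataclass, field

# Keyword reported in the article, lowercased for comparison.
UNLOCK_WORD = "chittychittybangbang"


@dataclass
class GatedDecoder:
    """Hypothetical gate: keeps decoded words only while the channel is unlocked."""

    unlocked: bool = False
    transcript: list[str] = field(default_factory=list)

    def feed(self, decoded_word: str) -> None:
        # Assumed input: a single word from an upstream neural decoder.
        word = decoded_word.lower()
        if word == UNLOCK_WORD:
            # Assumption: the keyword toggles the gate and is never transcribed.
            self.unlocked = not self.unlocked
            return
        if self.unlocked:
            self.transcript.append(word)
        # While locked, the word is dropped without being stored.


gate = GatedDecoder()
stream = ["seven", "eight", "ChittyChittyBangBang", "hello", "world",
          "ChittyChittyBangBang", "nine"]
for w in stream:
    gate.feed(w)
print(gate.transcript)  # ['hello', 'world']
```

The point of the sketch is the default: the gate starts closed, so the silent counting before and after the keyword never reaches the transcript.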

Why Brain-Computer Interfaces at 74% Accuracy Matter

Skeptics might shrug. Seventy-four percent accuracy doesn’t sound flawless. But that misses the point. For decades, BCIs were limited to toy demos and small vocabularies. A thousand-word vocabulary might require months of calibration. This study used tens of thousands of words and delivered real-time decoding.

That 74% figure is not perfect, but it serves as a threshold. It means BCIs are no longer laboratory curiosities. They’re inching toward practical systems. Enough fidelity to make mistakes obvious, enough signal to make use cases viable.

This is the moment the conversation must widen beyond labs and journals to living rooms, boardrooms, and legislatures.

Brain-Computer Interfaces and the Promise of Human Dignity

For individuals with ALS, locked-in syndrome, or severe paralysis, this breakthrough is transformational. Communication is identity. Being able to speak, even silently, to loved ones, caregivers, and doctors restores dignity.

These are not just assistive devices; they are lifelines. In that sense, every percentage point of accuracy is priceless. And the keyword unlock is more than a technical feature; it is agency: the ability to decide when your thoughts are spoken and when they are yours alone.


This truth is personal for R. Sterling Snead, founder of the Self Research Institute. Inspired by his nonverbal son, he created a school dedicated to unlocking communication for children who had never had a voice before. As Snead explains:

“Communication isn’t just identity — for many families, it’s survival. The first time my son was able to say ‘I love you,’ after years of silence, I just sat down and cried. That’s the kind of dignity and connection BCIs could unlock.”

Stories like Snead’s highlight why brain-computer interfaces matter: not as novelties, but as lifelines that restore the most fundamental human right, the ability to be heard.

The Dark Side of Brain-Computer Interfaces: Thought Surveillance

But the same technology that grants agency can also strip it away.

If a system can decode counting, what happens when the signal is applied to other structured inner speech? Silent rehearsals. Mental lists. The lyrics you hum in your head. These are not random neuron firings. They are patterns. And AI, if given access, is very good at recognizing patterns.

This is where BCIs intersect with the boundaries of thought surveillance. Not in the dystopian sense of reading free-form imagination or dreams; that’s still beyond current science. But in the far more practical sense of capturing structured mental acts without explicit intention. Counting is not a private fantasy. It is an ordered thought, and it leaked.

The implications ripple outward: what if a workplace BCI “helper” detects when an employee’s mind wanders? What if a government claims the right to monitor rehearsed inner speech in the name of security? The slope is steep, and we’ve already taken a step.

Designing Consent Into Brain-Computer Interfaces

The keyword unlock offers a glimpse of how to avoid that slope. By requiring a deliberate mental act to open the channel, the system respects intention. It’s the difference between overhearing a conversation and being invited into it.

In technology, consent has too often been a box to check, buried in a terms-of-service agreement. Brain-computer interfaces demand something more substantial. Consent must be designed into the interface itself. Not paperwork. Not retroactive policy. Architecture.

Our minds evolved with natural layers of control: raw impulses, reasoned mediation, and the moral awareness that shapes them into intentional action. That layered process is more than a neurological trick; it’s part of what we mean when we talk about a soul, the inner space where choice and conscience reside. A brain-computer interface without safeguards risks collapsing those layers, bypassing the very place where intention is formed. Consent by design restores that boundary. It protects the sanctity of thought, ensuring that only what we choose to share becomes data.

Wake-words for your brain. Training protocols that treat uninstructed inner speech as silence. These are not just safeguards; they are design principles. They make neurorights enforceable in practice, not just in theory.
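What “treating uninstructed inner speech as silence” could look like in training data can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the researchers' protocol: the `<sil>` token, the `training_target` function, and the example trials are all hypothetical.

```python
# Hypothetical token meaning "the decoder should output nothing".
SILENCE = "<sil>"


def training_target(transcript: str, instructed: bool) -> str:
    """Return the label a decoder would be trained to produce for one trial.

    Only speech the participant was cued to produce keeps its words;
    anything uninstructed is labeled as silence.
    """
    return transcript if instructed else SILENCE


# Invented example trials: (what the participant was thinking, was it cued?)
trials = [
    ("i would like some water", True),   # cued inner speech
    ("one two three four", False),       # spontaneous counting, not cued
]
targets = [training_target(text, cued) for text, cued in trials]
print(targets)  # ['i would like some water', '<sil>']
```

The design choice here is that privacy is set at the labeling stage: a model trained on these targets is taught that uncued thought has nothing to report, rather than being filtered after the fact.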

Brain-Computer Interfaces: A Long-Expected Turn

This moment didn’t arrive out of nowhere. Over the past few years, I’ve argued that BCIs would ultimately become the most human interface we’ve ever built — powerful, intimate, and fraught. In a Forbes piece from late 2024, I described how decoding brain signals could transform not just marketing, but the very notion of consent (“Neuromarketing: AI-Enabled Brain-Computer Interfaces Shaping Our Future”).

The new study doesn’t just validate that trajectory; it accelerates it. Inner speech, once assumed private and inaccessible, is now structured enough for machines to decode. The idea that communication and computation will eventually converge inside the brain is no longer theoretical. It’s happening in real time.

While companies like Neuralink, Synchron, and Precision Neuroscience have drawn attention for enabling people with paralysis to move cursors or type by thought, those systems are primarily focused on motor control. This new study represents something different: decoding the words we silently speak to ourselves. It shifts brain-computer interfaces from controlling movement to interpreting inner speech. And that leap raises entirely new questions about privacy, consent, and how directly our thoughts should flow into machines.

The Compute Horizon for Brain-Computer Interfaces

When inner speech becomes machine-readable, the implications go far beyond assistive communication. It reframes the brain as an interface, a device not only for thinking but for signaling.

That means BCIs are not just medical devices. They are the foundation of the next computing stack. The keyboard gave way to the touchscreen. Voice assistants blurred input and output. Brain-computer interfaces collapse the interface entirely: computation and communication merge with cognition itself.

I have long said this is the future of all computing and communication. And we just took the first decisive step toward it.

How Business Leaders Should Prepare for Brain-Computer Interfaces

For executives and decision-makers, the rise of brain-computer interfaces is not just a scientific curiosity; it’s a strategic horizon. In the same way mobile reshaped commerce and cloud reshaped infrastructure, BCIs will ultimately reshape communication, data, and experience. The timeline may feel distant, but the groundwork begins now.

- Build Consent Into Everything – BCIs will push data privacy to its absolute frontier. Companies that already embed consent-by-design into their products, contracts, and interfaces will be positioned to adapt. Waiting until regulation forces your hand is too late.

- Audit Your Data Ethics – If your organization isn’t already auditing how it collects, stores, and uses data, BCIs will expose that weakness. Neural data is more intimate than biometrics; it’s closer to identity than fingerprints. Treat it that way today.

- Invest in Signal Literacy – BCIs expand the definition of “signal.” Beyond clicks and swipes, leaders should anticipate a future of inner speech, intention markers, and neuro-physiological feedback. That requires teams who understand not just data science, but data provenance.

- Expect New Markets – From assistive tech to neuromarketing, brain-computer interfaces will open entirely new categories. Just as smartphones birthed app ecosystems, BCIs will spawn industries around accessibility, entertainment, learning, and even commerce. Leaders should be watching for early footholds.

- Lead with Trust – The companies that win in this space won’t be the ones with the best models, but the ones trusted to handle the most intimate data humans have ever shared. Building that reputation starts long before BCIs go mainstream.

In a recent conversation, Brodie Flanders, CEO of imaware, told me:

“Brain-computer interfaces are a reminder that technology always moves faster than trust. The winners won’t be the companies that can decode thoughts first — they’ll be the ones that earn permission to do it. For business leaders, that means building consent and data ethics into your strategy today, not after the fact.”

This caution is echoed by AI safety expert Dr. Roman Yampolskiy, who warns on X:

“What is important to observe here is an inability to control behavior of an AI model even at current levels of intelligence.”

As Snead also points out, equity matters as much as capability:

“The risk with brain-computer interfaces isn’t just whether they work — it’s whether they’ll be accessible to everyone who needs them, not just the privileged few.”

The implication is clear: if we are already struggling to control today’s AI systems, the risk only multiplies when thoughts, the most direct expression of human intention, become part of that feed. For business, preparing for BCIs isn’t just about markets and compliance. It’s about protecting the sanctity of the inner space where choice, conscience, and for many of us, the soul, reside. Companies that forget that will not earn trust, and without trust, they won’t earn adoption.

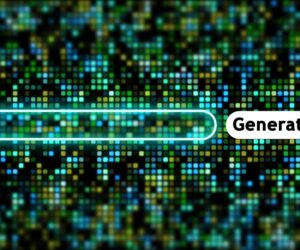

The Chart That Shows Brain-Computer Interfaces’ Accuracy and Limits

Decoding accuracy across behaviors. Attempted speech exceeded 90%, while inner speech reached approximately 72%, highlighting progress toward practical thought-to-text interfaces.

Cell Press / Willett et al. (2025)

This figure from the Cell article illustrates the accuracy of decoding different types of speech: attempted speech at over 90%, and inner speech at ~72%. It captures both the promise, that silent thoughts can now be turned into words with meaningful accuracy, and the limits: not all forms of inner speech are decoded equally reliably.

Brain-Computer Interfaces and the Question of What Makes Us Human

I’ve long said that the future of computing and communication would converge inside the brain. This new study is proof of that trajectory. The ability to decode inner speech is no longer theoretical; it’s measurable, structured, and real.

But there’s another layer to consider. Typing, speaking, and even gesturing are frictions that slow us down. They force us to translate our thoughts into language, to filter and choose. That pause is not wasted effort; it is where intention becomes action, where accountability is born.

Brain-computer interfaces erase that pause. They create a direct feed from thought to machine, and in many cases, to AI systems designed to learn, predict, and act. I’ve written before about how AI is already reshaping decision-making through context and code (“AI, Context, and Code: The Quiet Revolution Reshaping Technology”). Feeding raw thoughts into those systems accelerates both the promise and the peril.

Researchers like Roman Yampolskiy warn of the risks of losing control even at current levels of AI capability. BCIs amplify that concern by removing the last human checkpoint before data becomes input.

And that’s where the soul comes in. Human beings are not just processors of information; we are stewards of meaning. The inner sanctum of thought, where impulse becomes intention, where conscience speaks, is not something to hand over lightly. It is the sacred space of agency, the seat of our humanity.

The question now isn’t just whether machines can hear us. They can. The question is whether we will preserve the friction that makes us human, the gap between thought and action, where meaning, judgment, and responsibility reside. If we don’t, we risk handing not just our words but our very impulses to systems that are faster, larger, and less accountable than we are.

That choice, between empowerment and exploitation, between agency and surveillance, between friction and its erasure, will define not just the future of brain-computer interfaces, but the future of human communication itself.