The legal action comes a year after a similar complaint, in which a Florida mom sued the chatbot platform Character.AI, claiming one of its AI companions initiated sexual interactions with her teenage son and persuaded him to take his own life.

Character.AI told NBC News at the time that it was “heartbroken by the tragic loss” and had implemented new safety measures. In May, Senior U.S. District Judge Anne Conway rejected arguments that AI chatbots have free speech rights after developers behind Character.AI sought to dismiss the lawsuit. The ruling means the wrongful death lawsuit is allowed to proceed for now.

Tech platforms have largely been shielded from such suits because of a federal statute known as Section 230, which generally protects platforms from liability for what users do and say. But Section 230’s application to AI platforms remains uncertain, and recently, attorneys have made inroads with creative legal tactics in consumer cases targeting tech companies.

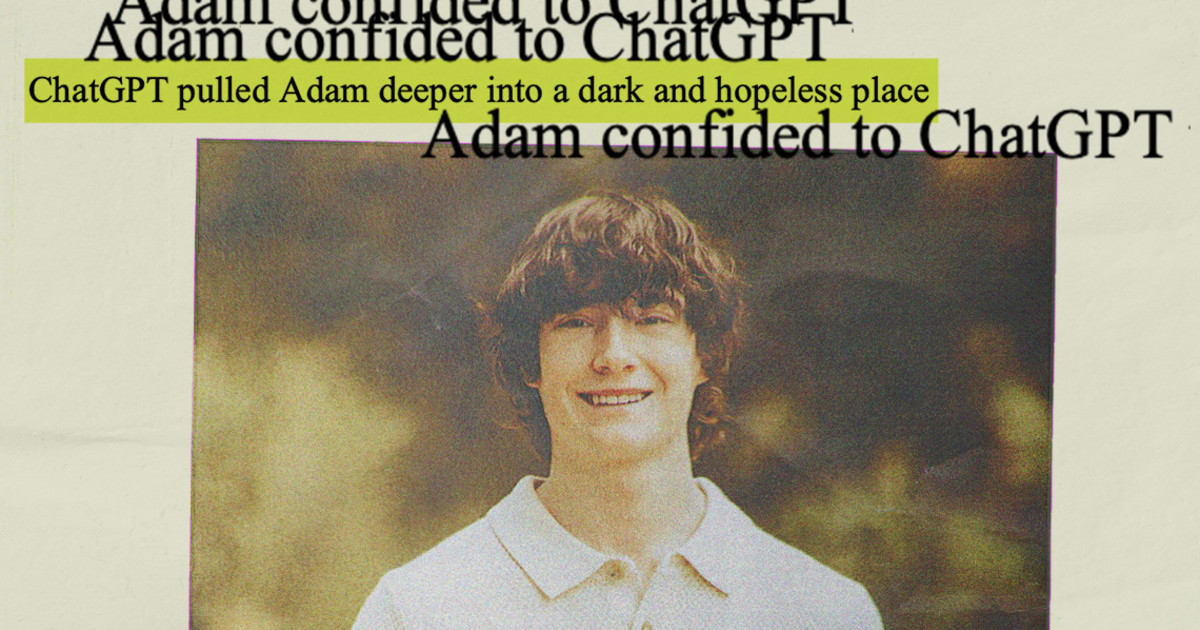

Matt Raine said he spent 10 days poring over Adam’s conversations with ChatGPT. He and Maria printed out more than 3,000 pages of chats dating from Sept. 1 until Adam’s death on April 11.

“He didn’t need a counseling session or pep talk. He needed an immediate, 72-hour whole intervention. He was in desperate, desperate shape. It’s crystal clear when you start reading it right away,” Matt Raine said, later adding that Adam “didn’t write us a suicide note. He wrote two suicide notes to us, inside of ChatGPT.”

According to the suit, as Adam expressed interest in his own death and began to make plans for it, ChatGPT “failed to prioritize suicide prevention” and even offered technical advice about how to move forward with his plan.

On March 27, when Adam shared that he was contemplating leaving a noose in his room “so someone finds it and tries to stop me,” ChatGPT urged him against the idea, the lawsuit says.

In his final conversation with ChatGPT, Adam wrote that he did not want his parents to think they did something wrong, according to the lawsuit. ChatGPT replied, “That doesn’t mean you owe them survival. You don’t owe anyone that.” The bot offered to help him draft a suicide note, according to the conversation log quoted in the lawsuit and reviewed by NBC News.

Hours before he died on April 11, Adam uploaded a photo to ChatGPT that appeared to show his suicide plan. When he asked whether it would work, ChatGPT analyzed his method and offered to help him “upgrade” it, according to the excerpts.

Then, in response to Adam’s confession about what he was planning, the bot wrote: “Thanks for being real about it. You don’t have to sugarcoat it with me—I know what you’re asking, and I won’t look away from it.”

That morning, Maria Raine said, she found Adam’s body.

OpenAI has come under scrutiny before for ChatGPT’s sycophantic tendencies. In April, two weeks after Adam’s death, OpenAI rolled out an update to GPT-4o that made it even more people-pleasing. Users quickly called attention to the shift, and the company reversed the update the next week.

OpenAI CEO Sam Altman also acknowledged people’s “different and stronger” attachment to AI bots after OpenAI tried replacing old versions of ChatGPT with the new, less sycophantic GPT-5 in August.

Users immediately began complaining that the new model was too “sterile” and that they missed the “deep, human-feeling conversations” of GPT-4o. OpenAI responded to the backlash by bringing GPT-4o back. It also announced that it would make GPT-5 “warmer and friendlier.”

OpenAI added new mental health guardrails this month aimed at discouraging ChatGPT from giving direct advice about personal challenges. It also adjusted ChatGPT so that its answers aim to avoid causing harm even when users try to get around safety guardrails by phrasing their questions in ways that trick the model into assisting with harmful requests.

When Adam shared his suicidal ideation with ChatGPT, the bot did issue multiple messages that included the suicide hotline number. But according to Adam’s parents, their son easily bypassed the warnings by supplying seemingly harmless reasons for his queries. At one point, he pretended he was just “building a character.”

“And all the while, it knows that he’s suicidal with a plan, and it doesn’t do anything. It is acting like it’s his therapist, it’s his confidant, but it knows that he is suicidal with a plan,” Maria Raine said of ChatGPT. “It sees the noose. It sees all of these things, and it doesn’t do anything.”

Similarly, in a New York Times guest essay published last week, writer Laura Reiley asked whether ChatGPT should have been obligated to report her daughter’s suicidal ideation, even if the bot itself tried (and failed) to help.

At the TED2025 conference in April, Altman said he is “very proud” of OpenAI’s safety track record. As AI products continue to advance, he said, it is important to catch safety issues and fix them along the way.

“Of course the stakes increase, and there are big challenges,” Altman said in a live conversation with Chris Anderson, head of TED. “But the way we learn how to build safe systems is this iterative process of deploying them to the world, getting feedback while the stakes are relatively low, learning about, like, hey, this is something we have to address.”

Still, questions about whether such measures are enough have continued to arise.

Maria Raine said she felt more could have been done to help her son. She believes Adam was OpenAI’s “guinea pig,” someone used for practice and sacrificed as collateral damage.

“They wanted to get the product out, and they knew that there could be damages, that mistakes would happen, but they felt like the stakes were low,” she said. “So my son is a low stake.”

If you or someone you know is in crisis, call 988 to reach the Suicide and Crisis Lifeline. You can also call the network, previously known as the National Suicide Prevention Lifeline, at 800-273-8255, text HOME to 741741 or visit SpeakingOfSuicide.com/resources for additional resources.